Chamber 🏰 of Tech Secrets #14

Will AI change the game for the bad guys and their cyber attacks?

The Chamber 🏰 of Tech Secrets is open. I was in Silicon Valley again this past week for Plug and Play’s conference. I also closed on a house June 1 and moved, got engaged a few weekends ago, and am planning a backyard wedding that is 3 weeks away. Needless to say, things are busy here! I’ll be off to QConNY in Brooklyn on Monday and hope to see some of you there.

This week we’ll explore the impacts of Artificial Intelligence on the balance of power between the good guys and the bad guys, and the challenges enterprises are facing in “shifting left” as a result.

I appreciate you taking the time to read and I hope you enjoy! 🙏

Security incidents will hockey stick 🏒

Autonomous AI Agents are getting tremendous attention. One can imagine a not-too-distant future where complex tasks can be completed with human quality, or better. This has the potential to make our lives easier and more convenient and allow our efforts to be deployed to valuable work and meaningful relationships instead of ordinary, mundane tasks.

With every new technology that is intended for good, there are inevitably bad actors who will leverage the same advances for malicious purposes.

Most applications are in or going to the cloud, so it makes sense for attackers to focus more of their energy there over time.

| Year | Cost ($) | # of Breaches |
| --- | --- | --- |
| 2016 | $325m | 3,800 |
| 2017 | $5b | 6,200 |
| 2018 | $8b | 8,600 |
| 2019 | $11.5b | 11,000 |
| 2020 | $15b | 13,400 |
| 2021 | $18.5b | 15,800 |
| 2022 | $22b | 18,200 |

Estimated and possibly inaccurate / Source Google Bard

Software vulnerabilities linger and emerge

There are numerous ways that vulnerabilities can exist in production environments:

Accidental release of known vulnerabilities into production environments: Deploying new or existing vulnerabilities in code or as dependencies can and does happen, including redeploying older library versions after the issue had already been remediated.

Failure to update code and dependencies in long-lived, rarely touched applications: Many companies “finish” an application and then let it do its job for years or even decades. Many experienced this with the log4j vulnerability, where they had code bases that were aging and had to be touched for the first time in many years, likely by people who were not even familiar with the code base. I have even heard stories of organizations leaving those vulnerable libraries in place and just removing inbound internet access because they were not capable of making changes.

Failing to patch known vulnerabilities (60% of breaches): A large number of companies do not have a software bill of materials and do not know what vulnerabilities exist in production (or at all). This failure leads to a huge number of exploits across the industry.

Bad configuration changes: Even with infrastructure as code in place (not a given), it is possible to make a network configuration mistake in a cloud environment and surface a resource that was intended to be private.
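To make the software bill of materials point a bit more concrete, here is a minimal sketch of comparing an inventory of deployed components against a list of known-fixed versions. The component names, versions, and the `advisories` mapping are made up for illustration, and the version parsing only handles plain dotted integers. Real SBOM formats and real advisory feeds are much richer than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    version: str


def parse_version(version: str) -> tuple[int, ...]:
    """Turn '2.14.1' into (2, 14, 1). Only handles plain dotted integers."""
    return tuple(int(part) for part in version.split("."))


def find_vulnerable(inventory, advisories):
    """Return components whose version is below the first fixed version.

    `advisories` maps a component name to the first version that contains
    the fix. This shape is an assumption made for the sketch.
    """
    flagged = []
    for component in inventory:
        fixed_in = advisories.get(component.name)
        if fixed_in and parse_version(component.version) < parse_version(fixed_in):
            flagged.append(component)
    return flagged


# Hypothetical inventory and advisory data, for illustration only.
inventory = [
    Component("example-logging", "2.14.1"),
    Component("example-http", "4.5.13"),
]
advisories = {"example-logging": "2.17.1"}

for component in find_vulnerable(inventory, advisories):
    print(f"{component.name} {component.version} needs an upgrade")
```

The hard part in practice is not this comparison. It is having a trustworthy inventory of what is actually running in production in the first place.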

The model we use: shift left with shared responsibility

In Cloud Computing, we leverage a shared responsibility model.

In this model we shift a subset of security and operations concerns to a cloud provider, and assume responsibility for a smaller subset. This is a great improvement as the primitives for building systems are secured very well by world-class experts at cloud provider companies.

However, the shared responsibility model still leaves a lot up to enterprises:

Patching VM hosts, databases, etc.

Understanding software library dependencies

Developing least privilege IAM policies

Managing ingress / egress rules

Configuring infrastructure and application services

Identity and access management

Data backup and recovery

Compliance with industry regulations
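As one small illustration of the "least privilege IAM policies" item above, here is a rough sketch that flags `Allow` statements using wildcards in an AWS-style policy document. The policy itself, the bucket name, and the `Sid` values are invented for the example. A wildcard is not always wrong (some actions only work against `*`), so treat this as a conversation starter and not a verdict.

```python
def as_list(value):
    """IAM policy fields may be a single string or a list of strings."""
    return value if isinstance(value, list) else [value]


def overly_broad_statements(policy):
    """Return Allow statements that use a wildcard action or resource."""
    findings = []
    for statement in as_list(policy.get("Statement", [])):
        if statement.get("Effect") != "Allow":
            continue
        actions = as_list(statement.get("Action", []))
        resources = as_list(statement.get("Resource", []))
        wildcard_action = any(a == "*" or a.endswith(":*") for a in actions)
        wildcard_resource = "*" in resources
        if wildcard_action or wildcard_resource:
            findings.append(statement)
    return findings


# Hypothetical policy, for illustration only.
policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "TooBroad", "Effect": "Allow", "Action": "s3:*", "Resource": "*"},
        {
            "Sid": "Scoped",
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": "arn:aws:s3:::example-bucket/reports/*",
        },
    ],
}

for statement in overly_broad_statements(policy):
    print(f"Review statement {statement['Sid']}: wildcard access granted")
```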

In the last few years, there has been a push to “shift left” and move this work towards product teams to give them full responsibility for their solutions. In some cases this has been shifting to platform teams instead.

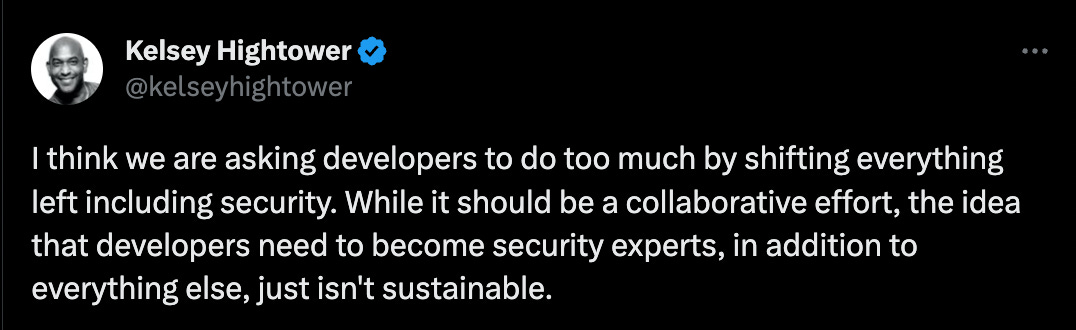

As usual, Kelsey says it well:

I suspect we will soon arrive at a future where every vulnerability that exists in an internet-reachable environment will be rapidly exploited by AI. What should enterprises do? Before we go there, let’s look at why I think this will occur.

The bad guys have what the good guys have.

The industry is benefiting from the cost of storage and compute going down over time while the capability of chips (CPU, GPU, TPU, etc) goes up. Unfortunately, the bad guys benefit from all of these factors. Here are a few thoughts on what they might be cooking up.

Language models to make phishing campaigns more convincing and successful. A huge percent of breaches still originate with phishing and/or stolen credentials.

Chaining language models and other AI capabilities to perform deeper probes into internet-exposed infrastructure. Port scans have been a constant for years, but I expect to see anything open get exercised in a much more rapid and much more sophisticated manner in the near term.

There are numerous open source vulnerability databases, such as Google’s OSV (new vulnerabilities for several ecosystems are aggregated with a freshness SLA of ~10 minutes). This helps the good guys find and remediate vulnerabilities, but also helps the bad guys know what is new and potentially exploitable.
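To show how low the barrier is for either side, here is a small sketch of asking OSV about a single package version through its public query endpoint. The helper names (`build_query`, `vulnerability_ids`, `query_osv`) are my own, and the response handling assumes the documented shape where matches appear under a `vulns` key. Check the OSV API documentation before relying on it.

```python
import json
import urllib.request

OSV_QUERY_URL = "https://api.osv.dev/v1/query"


def build_query(name, ecosystem, version):
    """Build the JSON body for a single package/version lookup."""
    return {"package": {"name": name, "ecosystem": ecosystem}, "version": version}


def vulnerability_ids(response_body):
    """Pull advisory IDs out of a decoded response; no 'vulns' key means none."""
    return [vuln["id"] for vuln in response_body.get("vulns", [])]


def query_osv(name, ecosystem, version, timeout=10):
    """Send the lookup to OSV and return the matching advisory IDs."""
    payload = json.dumps(build_query(name, ecosystem, version)).encode("utf-8")
    request = urllib.request.Request(
        OSV_QUERY_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return vulnerability_ids(json.load(response))
```

Calling something like `query_osv("jinja2", "PyPI", "2.4.1")` would return the advisory IDs OSV knows about for that version. The same few lines work just as well for someone looking for targets as for someone looking for things to patch.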

Challenges with fixing issues

While attacks are likely to get more rapid and sophisticated, enterprises are struggling to respond effectively. I believe this is especially true in the DevOps world (magnified if there is an absence of platform teams) where there are tens-to-hundreds of environments where changes are frequently made. Handling these issues can be challenging for many reasons, whether they are handled by software engineers, security engineers, or a platform team.

Misaligned incentives: Product teams want to build products, and are generally praised for feature-delivery, not keeping their product secure. Yes, it must be secure to be useful, but the business does not appreciate this until the product is no longer available and it’s too late. Most software engineers want to have a secure product but would prefer that not require extra effort.

Skill gaps: In a lot of enterprises, a product team may not have the experience or skillset to adequately handle all security concerns across their application stack, cloud environment, and toolchain. Expecting the average engineer to be a master of one-to-many programming languages, cloud infrastructure, pipeline tools, databases, caches, Kubernetes, network configuration, good architecture practices, and security is a bit unrealistic.

Architectural Context: Architectural Context is critical for understanding true vulnerability surface and remediating issues. Team churn, poor documentation, absence of ADRs or similar, shared environments (cloud accounts, platforms) all make it difficult to understand the nuances of how a solution really works. These are solvable problems, but many organizations are spread too thin to invest in adequate tooling to solve them. Context is generally lacking in security tools today. Many organizations do not even have a handle on what applications and tools exist in their enterprise portfolio or understand where their business data resides.

Golden-Year Applications: Some applications are in their golden years. They serve their purpose well but no longer have active feature development. This means they are rarely updated and redeployed and are very likely to contain vulnerable dependencies or code.

Proliferation of security tools: I read that the average large enterprise has 30+ tools helping them with their security posture. This creates lots of cracks for things to fall through.

Signal in the noise: Security tools generate tons of “critical” alerts that aren’t critical. Architecture context is generally lacking. This causes numbness to alerts that are really critical and constant distractions for production teams.

Intent vs mistake: It is difficult to tell when a “misconfiguration” was intentional or accidental. Perhaps the S3 bucket is open to the world because of a poor IAM policy? Perhaps it’s open to the world because it houses a set of files intended to be shared publicly?

Increasingly technical business user base: Gen Z grew up on the internet and they are eager to use technology in their work. This has led to a rise of “citizen development” or “business technologist” roles in organizations. A more technical user base likely means more systems and more software, but often driven by people with no software development background and no security knowledge. This creates an environment ripe for mistakes that lead to easy exploitation by the bad guys (and their AIs). It’s likely that most enterprises do not even know what their technology portfolio consists of anymore as their end users onboard new applications with click-through agreements or use free productivity tools to assist with their jobs (without realizing the data leak implications).
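One way to think about the "intent vs mistake" problem in the list above is to have teams declare intent explicitly and then compare it to what is observed. Here is a minimal sketch of that idea. The `Bucket` type, the `exposure-intent` tag name, and the classification labels are all hypothetical, and a real check would read this data from the cloud provider.

```python
from dataclasses import dataclass, field


@dataclass
class Bucket:
    name: str
    publicly_readable: bool
    tags: dict = field(default_factory=dict)


def classify(bucket, intent_tag="exposure-intent"):
    """Compare observed exposure with declared intent (hypothetical tag)."""
    if not bucket.publicly_readable:
        return "private"
    if bucket.tags.get(intent_tag) == "public":
        return "public-by-design"
    # Public with no declared intent: could be a mistake, so a human should look.
    return "needs-review"


# Hypothetical buckets, for illustration only.
buckets = [
    Bucket("marketing-downloads", True, {"exposure-intent": "public"}),
    Bucket("customer-exports", True),
    Bucket("internal-logs", False),
]

for bucket in buckets:
    print(f"{bucket.name}: {classify(bucket)}")
```

This does not solve the problem, since someone can still tag a bucket incorrectly, but it turns an ambiguous alert into a narrower question about the resources nobody has vouched for.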

The security tooling landscape is rapidly developing, but nobody has solved these challenges completely and effectively yet.

Will AI help?

Large Language Models tend to have a good understanding of software, likely due to its strong deterministic structure. They are already very capable of performing tasks like classifications on system trees, explaining what code does, generating new code or configuration, transpilation from language A to B, and more.

Will we see a future where Large Language Models and their other AI counterparts are on both offense and defense?

The Chamber 🏰 of Tech Secrets is closed. Thanks for reading and have a great week! 🙏
