The Chamber 🏰 of Tech Secrets is open!

Housekeeping: The Chamber 🏰 of Tech Secrets Book 📚 Club has officially launched 🚀. All details, including sign-up, are here. Team Topologies has a commanding lead, but there are still a few hours left to vote. I was cheering for The 100 Page Machine Learning Book, but accidentally voted for Team Topologies. 🤣

Let’s dig into this week’s topic. AI is a raging 🔥 of possibility. I have never seen a hype cycle like it (and it’s more than mere hype). Is the world about to fundamentally change? What should enterprises be doing and thinking about?

Adopting AI in the Enterprise

Let’s do a rapid level-set on terms for those who are new:

Artificial Intelligence (AI): The development of computer systems and algorithms that can perform tasks that typically require human intelligence.

Generative AI: A class of artificial intelligence models and algorithms that are designed to generate new data based on the patterns and structures they have learned from existing data. These models can be used to create a wide range of outputs, such as text, images, music, and more.

Large Language Models (LLMs): A type of Generative AI model specifically designed to handle and generate human language. These models are trained on massive amounts of text data, often from diverse sources like books, articles, websites, and more. The size of these models, both in terms of the number of parameters and the amount of training data, allows them to learn complex patterns, relationships, and nuances in language.

ChatGPT: A textual, chat-based interface that proxies OpenAI’s GPT models. It provides a system prompt, text inputs/outputs, etc. It has a growing list of features including plugins and real-time web search.

Throughout this post, it will be helpful to think about text generation since most readers will be familiar with OpenAI’s ChatGPT.

Pumping the brakes

What will it take for enterprises to leverage Generative AI? Not just to use it, but to use it in a way that is really game-changing, like the hype is calling for...

The current generation of models, specifically GPT3.5+, has been extremely impressive in manifesting a new level of “intelligence” that has captivated the world (or at least Twitter) and made ChatGPT the fastest technology product to 100M users in history. Soon, every incumbent application will have AI embedded or face potential [quick] disruption. I won’t pretend to know if we are on an exponential or logistic curve with these developments, but we are definitely hockey-sticking right now. 🏒

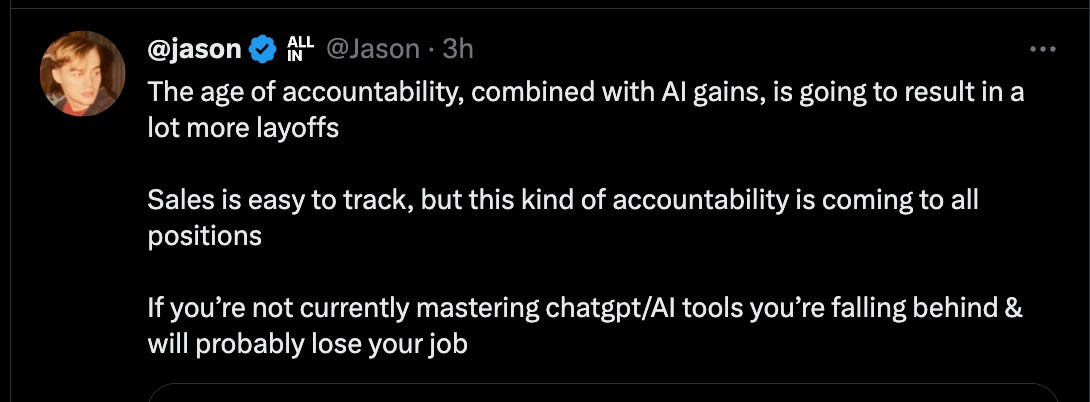

Is it “adopt AI or die” time? Is it a new age of accountability in the business world?

When thinking about enterprises, remember that incumbent technologies are tough to disrupt. Traditional players are entrenched. They already have sales relationships. They can capitalize on a late-mover advantage. Enterprises often face high costs and long timelines for replacement (meaning migration or reimplementation) in production environments.

Really great products will overcome a lot of these hurdles, but it probably won’t be an easy road for most, and it will take more than just embedding a chat bot in the application. I think a whole new class of applications (and businesses) that are possible because of AI is coming, but let’s save that monologue for another day. Today, let’s focus on enterprises.

What are enterprises thinking about?

This is not exhaustive, but here are some things enterprises are likely thinking about as they consider employing Generative AI to power their businesses.

Data / Intellectual Property (IP) leaking: one of the top concerns enterprises have is their employees dropping confidential or sensitive data into the friendly text box to refine, summarize, aggregate, generate. Today, OpenAI assures your data will not be used to train or improve their model when you use their API. Your data is your data. ChatGPT for business will make the same commitment to users via the chat interface (presumably with some sort of organizational login?). In the meantime, the free public (or plus member) version makes no such guarantees, and there is likely a lot of enterprise data leaking out of organizations around the world.

IP Ownership: How does ownership work for AI-generated things? Think about source code. When you create new code from a model that has been trained on lots of other code you did not write and do not own, what does that mean? Is this different from a human reading Stack Overflow articles and copy/pasting sample projects that are then changed to meet their needs?

Security concerns: How do we combat the new class of security challenges that come with Generative AI services: prompt injections, prompt engineering, and model jailbreaking? While API surfaces are fairly small, inputs are currently unbounded (put stuff in the text box).

How will identity management and RBAC be solved? How do we maintain least privilege for AI functions such as executing actions (APIs) or retrieving data that may be sensitive in nature? Some sort of proxy layer (input / output sanitization, caching, etc.) seems likely, but it’s more to build and operate and creates an attractive new attack surface.
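To make the proxy-layer idea a bit more concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `LLMProxy` class, the `redact` helper, the two regex patterns, and the `echo_model` stand-in are hypothetical, and a real deployment would call an actual model API and would need far more thorough detection of sensitive data than two regular expressions.

```python
import re
from typing import Callable, Dict

# Hypothetical patterns for data that should not leave the organization.
# Real sanitization would need far broader coverage than this.
SENSITIVE_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace anything matching a sensitive pattern with a placeholder tag."""
    for label, pattern in SENSITIVE_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


class LLMProxy:
    """Sits between callers and a model: sanitizes input and output, caches replies."""

    def __init__(self, model: Callable[[str], str]) -> None:
        self._model = model                # any function that maps prompt -> reply
        self._cache: Dict[str, str] = {}   # sanitized prompt -> sanitized reply
        self.model_calls = 0               # how often the model was actually invoked

    def complete(self, prompt: str) -> str:
        clean_prompt = redact(prompt)      # input sanitization
        if clean_prompt not in self._cache:
            self.model_calls += 1
            reply = self._model(clean_prompt)
            self._cache[clean_prompt] = redact(reply)  # output sanitization
        return self._cache[clean_prompt]


def echo_model(prompt: str) -> str:
    """Stand-in for a real model call; it simply echoes the prompt it received."""
    return f"You said: {prompt}"


if __name__ == "__main__":
    proxy = LLMProxy(echo_model)
    print(proxy.complete("Email jane.doe@example.com about SSN 123-45-6789"))
```

Note that the cache is keyed on the sanitized prompt, so the raw sensitive text is never stored. Per-user authorization (the RBAC question above) is deliberately left out of this sketch.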

Customer experience concerns: Enterprises have a responsibility to protect their brand perception. Sometimes this means being careful about being “wrong” or responding with bias that offends customers (and then goes viral). For this reason, I expect the direct customer-facing uses of Generative AI to move slowly. Just like we see Google moving cautiously to protect their brand image (which was still briefly tarnished by an incorrect Bard answer), other enterprises are doing and will continue to do the same… particularly for customer-facing use cases.

Incorrect answers → bad decisions: A lot of enterprises fear their employees blindly accepting responses from an AI model and then making incorrect decisions based on bad data.

New skills to develop: Generative AI is a new discipline to learn and there are few people with real experience applying the latest practices. There has been very little time for good (not to mention best) practices to emerge. [Yes, I know GPT models have been around for several years.] While OpenAI exposes their models through fairly straightforward APIs, a lot of organizations will have to build (or buy) the skills to use them effectively, particularly if they do not have an established software engineering culture. Some examples of things to learn are:

Model selection

Model training (if required)

Model fine-tuning

Embeddings

Vector Databases

Various plug-in models

(Effective) Prompt engineering

Chaining

You can try to ask ChatGPT to do all of this for you… but good luck.
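Two of the items in the list above, prompt engineering and chaining, are easier to picture with a small example. The Python sketch below is only an illustration under stated assumptions: the prompt wording, the `classify` and `draft_reply` helpers, and the `fake_model` stand-in are all hypothetical, and a real system would replace `fake_model` with a call to whichever model API it uses. The point is the shape: ask for structured output, validate it before trusting it, then feed the result into a second prompt.

```python
import json
from typing import Callable

# Hypothetical prompt that asks the model for machine-readable output.
PROMPT_TEMPLATE = (
    "Classify the support ticket below.\n"
    'Respond with JSON only, in the form {{"category": "...", "urgent": true}}.\n'
    "Ticket: {ticket}"
)


def classify(ticket: str, model: Callable[[str], str]) -> dict:
    """Step 1: request structured output and validate it before using it."""
    raw = model(PROMPT_TEMPLATE.format(ticket=ticket))
    data = json.loads(raw)  # raises ValueError if the reply is not valid JSON
    if (
        not isinstance(data, dict)
        or not isinstance(data.get("category"), str)
        or not isinstance(data.get("urgent"), bool)
    ):
        raise ValueError(f"unexpected model output: {raw!r}")
    return data


def draft_reply(ticket: str, model: Callable[[str], str]) -> str:
    """Step 2 (the chain): use the validated result to build the next prompt."""
    result = classify(ticket, model)
    follow_up = (
        f"Write a one-sentence reply for a {result['category']} ticket. "
        f"Urgent: {result['urgent']}."
    )
    return model(follow_up)


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call, with canned replies for each step."""
    if prompt.startswith("Classify"):
        return '{"category": "billing", "urgent": true}'
    return "Reply drafted from: " + prompt


if __name__ == "__main__":
    print(draft_reply("I was charged twice this month", fake_model))
```

Real models do not always return valid JSON, which is why the validation step matters more than the prompt itself.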

How do we keep the AI from escaping and taking over the company and then the world? Not everyone is asking that, but some people are.

11 questions to ask yourself today

Here are some questions I am thinking about related to AI:

What security, privacy, data / IP leak criteria do we need to create guidance around and make sure everyone is on the same page about? Encourage use within safe parameters. I’m not a huge fan of prohibiting usage altogether, which some companies are doing.

What models and vendors do we want to use? Do we have any reasons to train our own model (probably not for most)? Figure that out and get partners and contracts in place.

Can we train (or use embeddings / fine-tune) a model on our organizational data and make that data conversationally searchable for internal staff? How quickly? This satisfies the craving everyone has to use the new tools like GPT4 (alleviating some risk of data leak through public services) and also serves as a force multiplier for employees. It avoids the customer-facing risks.

What else can we do with LLMs or other Generative AI tools? Explore plugins, chaining, prompt engineering with more structured inputs and outputs, etc. Explore LLM impact on existing products and services and un-served use cases.

Can we enable 10x developers by encouraging and enabling the use of code-assist tools (GitHub Copilot X, Amazon CodeWhisperer, GPT models, etc.)? I believe the 10x developer is inevitable and wrote about it in Chamber of Tech Secrets #3.

What tools are emerging that can make LLM adoption easier for a large enterprise? Watch the emerging tooling market for platforms that help with a lot of these challenges.

What skills do we need to develop? Vector databases? Machine Learning? Legal knowledge? Rapid on-boarding of vendors? What skills will help us be prepared to execute when the time is right?

What projects can we do to learn? I plan to build some sort of agent to assist me with a percentage of my normal tasks, mostly for the purposes of learning, but also in hopes of automating some of that work.

What is the most important AI-enabled solution we should build / buy this year? Exit the hype interstate and consider what will really add value to the business and what you would do if you could only pick one thing. Also consider what existing work you have planned that AI might disrupt, and whether that work should be reconsidered.

Where might the next wave of disruption come from? What will Apple, Meta and Amazon do? What will on-device or in-browser LLMs mean for future applications and use cases? What are the impacts of WebGPU (launching in Chrome this week)? Will we converge on a single model (GPT5?) or use many different models that are fine-tuned for various purposes? How do we make bets now to learn without getting over-committed while we’re in a state of rapid change?

What does the next-gen application look like? My thesis is that LLMs will be similar to how we see databases today: every application has one of some kind for some purpose. What techniques do we need to learn and master to use LLMs and other generative AI tools in our products?
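One of the questions above asks whether organizational data can be made conversationally searchable using embeddings. The retrieval half of that idea can be sketched in a few lines of Python. This is a toy under loud assumptions: `embed` is a bag-of-words counter standing in for a real embedding model, `TinyVectorStore` is a hypothetical in-memory stand-in for a vector database, and the sample documents are made up. In a fuller system the top-ranked documents would then be placed into the prompt sent to an LLM.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorStore:
    """In-memory stand-in for a vector database: stores documents with their vectors."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, Counter]] = []

    def add(self, document: str) -> None:
        self._items.append((document, embed(document)))

    def search(self, query: str, k: int = 1) -> List[str]:
        """Return the k stored documents most similar to the query."""
        query_vector = embed(query)
        ranked = sorted(
            self._items,
            key=lambda item: cosine(query_vector, item[1]),
            reverse=True,
        )
        return [document for document, _ in ranked[:k]]


if __name__ == "__main__":
    store = TinyVectorStore()
    store.add("Expense reports are due on the fifth of each month")
    store.add("The VPN requires multi-factor authentication")
    store.add("Vacation requests go through the HR portal")
    print(store.search("when are expense reports due"))
```

Word counts only match on shared vocabulary. The appeal of learned embeddings is that they can also match on meaning, which is why they show up on the skills list earlier in this post.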

Enterprises are big ships and take a long time to turn. If you are thinking “this time it’s different”, look at the last big disruptor: cloud computing. Years later, we still have huge percentages of enterprise workloads on-prem, not to mention on mainframes! Enterprises will adopt AI. There will be jobs that get replaced. There will be disruption: new business models, new players, new systems. There will be smaller engineering teams with more productive engineers. There will be personalized but auto-generated emails for sales purposes. There will be all kinds of unexpected disruptions. There will be new experiences and new value created. But it won’t all happen this week.

Do you think there will be a point where someone owns a powerful enough AI model (compared to competition or perhaps competing models of sufficient capability) that it becomes much more valuable to use it in house than to make it available to other people/enterprises?

Always enjoy this topic. Your considerations are over my head of course.

Absolutely, you blink and things change.